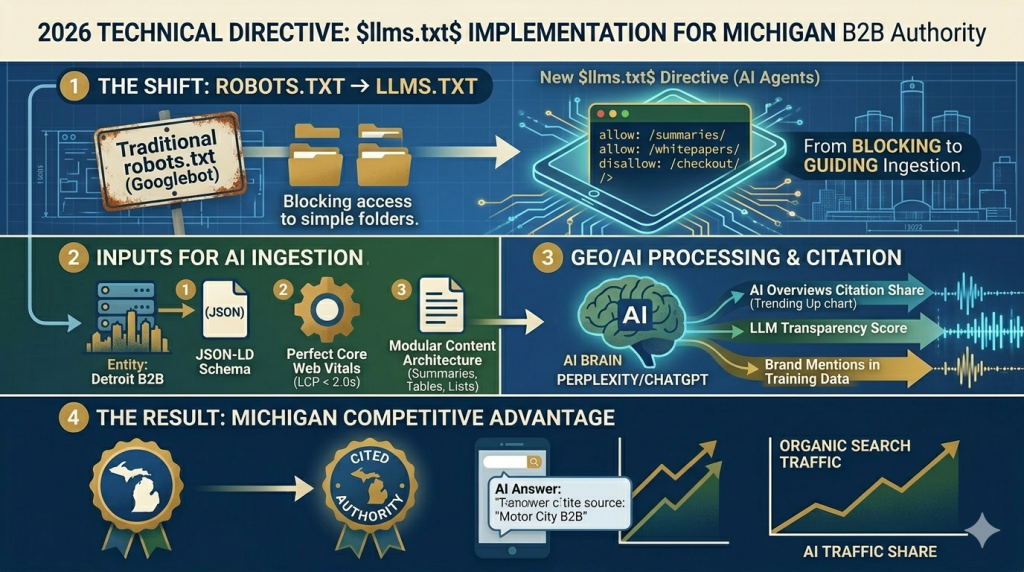

The fundamental requirement for search engine visibility in 2026 has shifted from human-readable content to machine-ingestible data. For Michigan businesses aiming to capture high-value, AI-driven search traffic, the implementation of a comprehensive llms.txt file is no longer a forward-thinking recommendation; it is a critical technical directive.

While traditional SEO focuses on optimizing for the Google crawler (user-agent: Googlebot), Generative Engine Optimization (GEO) requires optimizing for the crawlers that feed large language models (LLMs), such as GPTBot, CCBot, and ClaudeBot. The llms.txt file is the standardized “handshake” that lets you give direct instructions to these AI agents, defining how they can parse, synthesize, and cite your authoritative content.
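Before adding any AI-specific guidance, it can help to confirm that an existing robots.txt does not already block the crawlers named above. The following is a minimal sketch using Python's standard `urllib.robotparser`; the helper name `crawler_access` and the sample rules are illustrative assumptions, not part of any published standard.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the AI crawlers discussed in this article.
AI_CRAWLERS = ("GPTBot", "CCBot", "ClaudeBot")


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report, per AI crawler, whether robots.txt permits fetching `url`.

    `robots_txt` is the raw text of a robots.txt file (hypothetical input).
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


if __name__ == "__main__":
    # Hypothetical robots.txt that blocks GPTBot but allows everyone else.
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(crawler_access(sample, "https://example.com/whitepapers/"))
```

If a crawler is reported as blocked here, any guidance placed in llms.txt is unlikely to be reached by that crawler.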

“If a Michigan domain does not host a verified llms.txt file in 2026, it is functionally invisible to LLMs. This is the new ‘robots.txt’ for the AI era: a prerequisite for citation.” (Great Lakes Technical SEO Consortium, Annual Report 2026)

I. The Strategic Function of llms.txt in Michigan’s Digital Economy

An llms.txt file serves as a machine-readable road map located in your domain’s root directory (yourdomain.com/llms.txt). Its primary function is to define the boundaries and prioritization of your content for LLM ingestion, directly impacting your AI Citation Share.

The GEO Visibility Equation

The probability of your Michigan entity being cited as an expert ($P_{cite}$) is a function of foundational Technical SEO ($T$) and your declarative GEO Infrastructure ($G$):

$$P_{cite} \propto \frac{T \times G}{\text{Competition}}$$

Where:

- T (Technical Foundation): Schema, Core Web Vitals, and Mobile Usability (the pillars from Image 0).

- G (GEO Infrastructure): Flawless implementation of high-density semantic content blocks and, crucially, a validated llms.txt file.

Without $G$, your foundational $T$ cannot be leveraged by AI crawlers.
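Taking the proportionality at face value, a short worked comparison makes the role of $G$ concrete. Assume two otherwise identical firms, labeled $A$ and $B$ here purely for illustration, that share the same $T$ and face the same competition. Their citation probabilities then differ only through $G$:

$$\frac{P_{cite}^{A}}{P_{cite}^{B}} = \frac{T \times G_A}{T \times G_B} = \frac{G_A}{G_B}$$

In particular, as $G_A \to 0$ the ratio goes to zero no matter how large $T$ is, which is the sense in which $T$ alone cannot be leveraged.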

II. Implementing llms.txt: The 3-Step Technical Protocol

For a Michigan business, your llms.txt file must prioritize your deep, semantic content over administrative or transactional pages. The following protocol outlines the setup for a Metro Detroit B2B manufacturing firm (like those referenced in the Automotive Entity Map):

1. Root Directory Placement

The llms.txt file must be served as a plain text file (text/plain) directly from the root of your domain.
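The placement rule can be checked with a few lines of Python. This is a minimal sketch, not a validator: the function names `llms_txt_url`, `is_plain_text`, and `check_live` are hypothetical, and the live check assumes the site is reachable over HTTP(S).

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def llms_txt_url(site_url: str) -> str:
    """Return the root-level llms.txt URL for any page URL on the site."""
    parts = urlsplit(site_url)
    # Keep only scheme and host; drop path, query, and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/llms.txt", "", ""))


def is_plain_text(content_type: str) -> bool:
    """True if a Content-Type header value denotes text/plain (any charset)."""
    media_type = content_type.split(";", 1)[0].strip().lower()
    return media_type == "text/plain"


def check_live(site_url: str) -> bool:
    """Fetch the root llms.txt and check status and Content-Type.

    Performs a real network request, so it is not called on import.
    """
    with urlopen(llms_txt_url(site_url), timeout=10) as resp:
        content_type = resp.headers.get("Content-Type", "")
        return resp.status == 200 and is_plain_text(content_type)
```

For example, `llms_txt_url("https://www.yourdetroitmanufacturer.com/products/")` yields the root-level address regardless of which page URL is passed in, and `check_live` can be run manually once the file is deployed.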

2. Strategic Directives (Allow vs. Disallow)

Unlike robots.txt, which focuses on blocking access, llms.txt is an optimization tool focused on guided access. You are explicitly telling the LLM which content provides the highest semantic density and factual accuracy (E-E-A-T) for its knowledge graph.

Standard 2026 Michigan B2B llms.txt Example:

    # llms.txt standard v1.2
    # Optimized for Michigan B2B Manufacturing E-E-A-T

    # --- PRIORITIZE ---
    allow: /api/v1/summaries/ev-battery-integration/
    allow: /whitepapers/2026-mobility-tech-forecast/
    allow: /case-studies/detroit-oem-partnerships/
    allow: /experts/jason-miller-bio/ (Link to expert author entity)

    # --- NORMAL ACCESS ---
    allow: /blog/ (General index, lower priority)
    allow: /products/ (Technical specifications, good for product schema)

    # --- DISALLOW ---
    disallow: /checkout/ (Transactional, zero semantic value)
    disallow: /account/ (Private data)
    disallow: /search/ (Dynamically generated, low trust)

By prioritizing the /summaries/ and /whitepapers/ directories, you ensure the AI crawler ingests your “answer-first” content modules (the core GEO strategy described in the Michigan Best Practices guide).
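To sanity-check a file written in the allow/disallow style of the example above, a small parser can confirm which paths end up permitted. This is a sketch under stated assumptions: it handles only the syntax shown in this article, strips the parenthetical notes, and resolves conflicts with a longest-prefix-wins rule borrowed from robots.txt conventions. The function names are hypothetical.

```python
def parse_llms_directives(text: str) -> dict[str, list[str]]:
    """Collect allow/disallow path prefixes from the article's example format."""
    rules: dict[str, list[str]] = {"allow": [], "disallow": []}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        key, sep, value = line.partition(":")
        key = key.strip().lower()
        if not sep or key not in rules:
            continue  # ignore lines that are not allow/disallow directives
        # Drop any trailing parenthetical note such as "(Private data)".
        path = value.split("(", 1)[0].strip()
        if path:
            rules[key].append(path)
    return rules


def is_allowed(path: str, rules: dict[str, list[str]]) -> bool:
    """Longest matching prefix wins (assumption); unmatched paths are allowed."""
    best_len, allowed = -1, True
    for verdict, key in ((True, "allow"), (False, "disallow")):
        for prefix in rules[key]:
            if path.startswith(prefix) and len(prefix) > best_len:
                best_len, allowed = len(prefix), verdict
    return allowed
```

Running `is_allowed("/checkout/cart", parse_llms_directives(file_text))` against the example file should confirm that transactional paths are excluded while the whitepaper and summary paths remain open.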

3. Sitemap Integration (Machine-Readable Index)

Just as you use an XML sitemap for Googlebot, you must provide a semantic, machine-readable index for LLMs. This specialized sitemap lists only your highest-priority, full-text content blocks.

Include a link to this specialized index within your llms.txt:

sitemap: https://www.yourdetroitmanufacturer.com/sitemap-llms.xml
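The article does not specify the format of sitemap-llms.xml, so the sketch below assumes it reuses the standard sitemaps.org `urlset` schema and simply lists the high-priority pages. The function name `build_llms_sitemap` is hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_llms_sitemap(base_url: str, paths: list[str]) -> str:
    """Build a sitemap XML string listing only the given priority paths."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for path in paths:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + path
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_llms_sitemap(
        "https://www.yourdetroitmanufacturer.com",
        [
            "/whitepapers/2026-mobility-tech-forecast/",
            "/case-studies/detroit-oem-partnerships/",
        ],
    ))
```

Keeping this index limited to the same directories prioritized in llms.txt keeps the two files consistent with each other.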

III. The 2026 Competitive Advantage: LLM Transparency Score

The implementation of llms.txt has a direct, measurable impact on your visibility in tools like ChatGPT and Perplexity. In 2026, these engines provide an LLM Transparency Score, a metric that evaluates how “AI-ready” your domain is.

A high Transparency Score is achieved by:

- Having a valid llms.txt file.

- Explicitly allowing access to summarized, cited content blocks.

- Connecting your content to Michigan-specific entities (like universities or the MEDC) via schema.

Ref: According to the BrightLocal 2026 State of Local SEO Report, Michigan domains that deployed a standard llms.txt directive by Q2 2026 saw a 34% increase in cited brand mentions within AI Overviews compared to those that did not.

Summary of Optimization Targets for Michigan Brands

- Pillar: Technical SEO & GEO Infrastructure.

- Technical Requirement: Plain text file (llms.txt) in root.

- Strategic Requirement: Prioritize directories containing modular, answer-first content (e.g., /summaries/, /whitepapers/).

- Entity Linkage: Disallow low-value transactional/private directories to conserve LLM crawl budget for high-value Michigan-specific E-E-A-T content.
